The "experts" are clutching their pearls again. Every time a government mentions a social media ban for minors, a predictable wave of pushback hits the news cycle. You’ve heard the refrain: it’s a "lazy fix," it’s "impossible to enforce," or it "stifles digital literacy." This consensus isn't just wrong; it’s a coordinated defensive crouch by an industry that has spent two decades treating your child’s attention as a commodity.

The argument that bans are "lazy" is the ultimate gaslighting. It suggests that complex, algorithmic manipulation—designed by the brightest minds in behavioral science to keep dopamine loops firing—can be countered by a few "family conversations" or a generic school module on internet safety. It’s like telling a parent to teach their kid "moderate nicotine literacy" while Philip Morris installs a vending machine in the bedroom.

We need to stop pretending this is a debate about education. This is a debate about architectural harm.

The Digital Literacy Myth

The most common objection to bans is that we are robbing children of the chance to "learn the ropes." This premise assumes that social media is a tool, like a hammer or a spreadsheet. It isn’t.

Modern social platforms are asymmetric psychological environments.

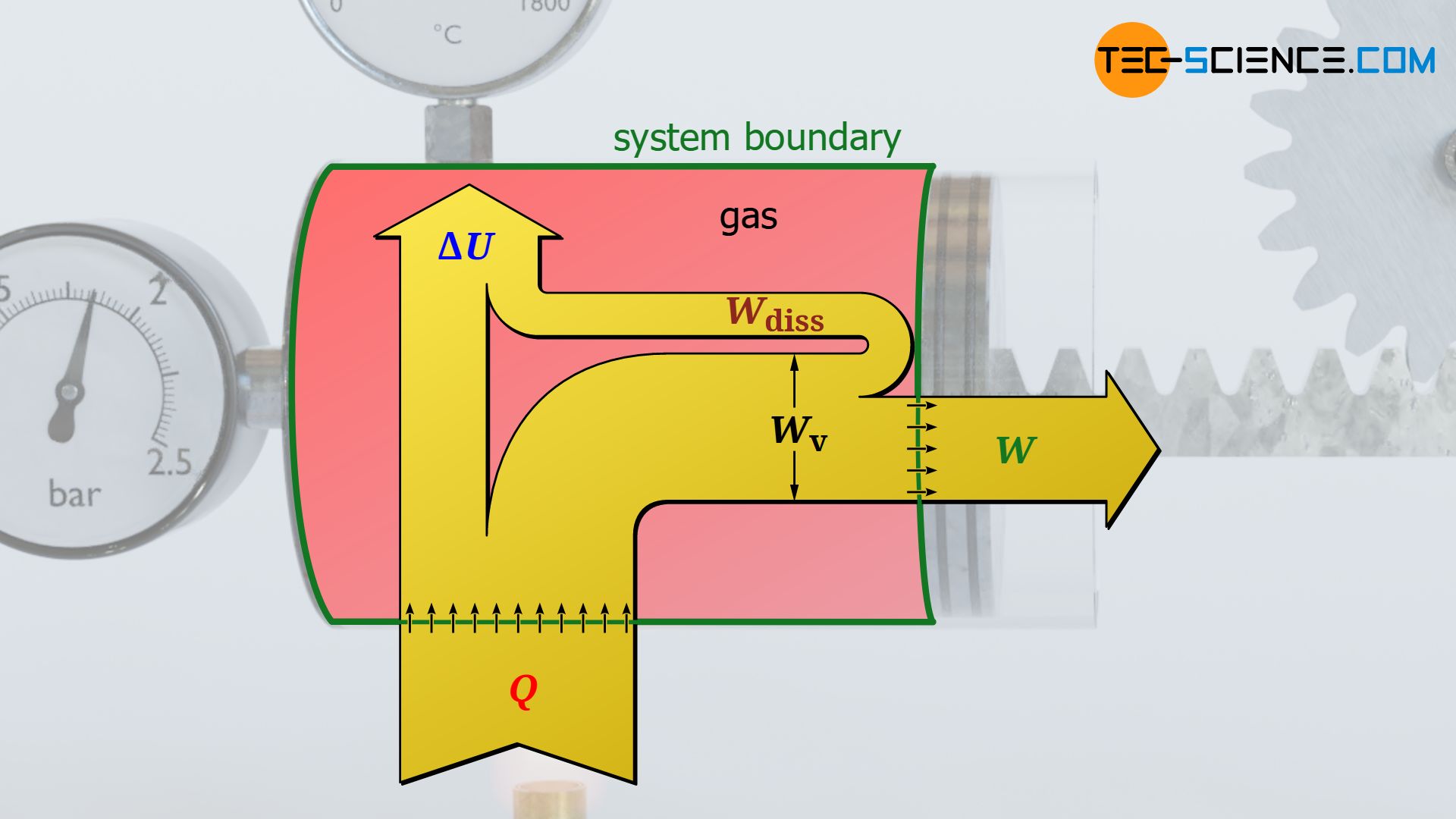

On one side, you have a 13-year-old with a developing prefrontal cortex, the part of the brain responsible for impulse control and long-term planning. On the other side, you have a $500 billion corporation running reinforcement-learning systems fed by human feedback (every like, pause, and swipe) to optimize for engagement. The math is rigged. When the algorithm identifies a vulnerability, whether it’s body dysmorphia or radicalization, it doesn't "educate" the user. It exploits the weakness.

I’ve spent years watching tech companies "self-regulate." It’s a theater of shadows. They add a "screen-time reminder" buried five menus deep while simultaneously shortening video loops to six seconds to maximize the "swipe" reflex. Calling a ban "lazy" ignores the reality that the current "active" solution, parental controls, is a catastrophic failure.

The Enforcement Fallacy

Critics love to point out that kids will use VPNs or fake birthdays to bypass bans. "If they can get around it, why bother?"

This logic is intellectually bankrupt. We have age restrictions on alcohol, tobacco, and driving. Do some kids find a way to get a bottle of vodka? Of course. Does that mean we should remove the age floor for liquor stores and replace it with a "responsible drinking" app?

The goal of a ban isn't 100% eradication; it’s the destruction of the network effect.

Social media runs on social necessity. Teens aren't on TikTok because they love the interface; they’re there because everyone else is there. By implementing a legal ban, you shift the default. You give parents the ultimate "out." When a child says, "But everyone else is on it," a parent can finally respond, "Actually, it’s illegal, and the app won’t even let you register without a verified ID."

It moves the burden of proof from the parent to the platform.

The False Equivalence of Digital Connection

We are told that bans will "isolate" marginalized youth. This is the "human rights" shield that Big Tech loves to hide behind. It is a cynical use of vulnerable populations to protect a business model built on data extraction.

Let’s be clear: digital connection is not a substitute for physical community. In fact, $r$, the correlation coefficient between heavy social media use and self-reported loneliness, has remained persistently positive over the last decade.
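For readers unfamiliar with the symbol: assuming $r$ here is the standard Pearson correlation coefficient, with $x_i$ a respondent's social media usage and $y_i$ their reported loneliness across $n$ respondents, it is defined as

$$
r = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n}(x_i - \bar{x})^2}\,\sqrt{\sum_{i=1}^{n}(y_i - \bar{y})^2}}
$$

where $\bar{x}$ and $\bar{y}$ are the sample means. $r$ ranges from $-1$ to $1$; a positive value means heavier users tend to report more loneliness.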

- The Paradox of Choice: More "friends" leads to shallower investment.

- The Comparison Trap: Real life cannot compete with a curated, filtered, and AI-enhanced feed.

- The Sleep Deficit: The blue light and the notification pings are physically degrading the quality of adolescent rest.

If we were truly worried about isolation, we would be funding third spaces—libraries, parks, and youth clubs—rather than handing our children a digital portal that monitors their every keystroke.

The Economic Reality of the "Lazy" Argument

Why do academics and industry-funded "think tanks" call bans lazy? Because a ban is the only thing that actually hurts the bottom line.

Design changes, "educational" pop-ups, and "well-being" dashboards are all things the industry can absorb. They are performance art. A ban, however, stops the data pipeline at the source. It prevents the brand-loyalty encoding that happens between the ages of 11 and 16.

If you want to see a "pivotal" shift in corporate behavior, don't ask for a better algorithm. Cut off the supply of users.

The Hard Truth About Parental Responsibility

There is a segment of the population that hates bans because it forces a confrontation with our own failures. We used these devices as digital pacifiers. We liked the quiet they provided at the dinner table.

Calling a ban "lazy" is a projection. The lazy path was allowing a generation to be raised by an unvetted, profit-driven algorithm because it was convenient. The hard, "non-lazy" path is the legislative battle to claw back our children's cognitive development from companies that see them as nothing more than a series of data points.

The Mechanics of Effective Prohibition

If we are going to do this, we have to stop half-measures. A "ban" that relies on an "I am 18" checkbox is a joke. Real prohibition requires:

- Hardware-Level Verification: Shifting the verification to the OS or the ISP level, not the app level.

- Statutory Damages: Making the fine for hosting a minor higher than the projected Lifetime Value (LTV) of that user.

- The End of Anonymity for Minors: If you want to play in the digital commons, you need a verified, age-gated identity.
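The statutory-damages point is, at bottom, arithmetic, and it can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a legal formula: the revenue per user, retention period, and discount rate below are hypothetical inputs, and the `detection_probability` parameter is an added assumption (not in the argument above) reflecting that a fine only deters in proportion to how often it is actually imposed.

```python
# Hypothetical deterrence check: is the per-minor fine larger than what that
# user is worth to the platform? All inputs are illustrative assumptions.


def lifetime_value(annual_revenue: float, years: int, discount_rate: float) -> float:
    """Present value of the revenue one user generates over `years`,
    discounting each year's revenue at `discount_rate`."""
    return sum(annual_revenue / (1 + discount_rate) ** t for t in range(1, years + 1))


def minimum_deterrent_fine(ltv: float, detection_probability: float = 1.0) -> float:
    """Break-even fine: the expected cost (fine x chance of being caught)
    equals the LTV. Any fine above this makes hosting a minor unprofitable."""
    if not 0 < detection_probability <= 1:
        raise ValueError("detection_probability must be in (0, 1]")
    return ltv / detection_probability


if __name__ == "__main__":
    # Hypothetical numbers: $50/year per user, retained 5 years, 10% discount rate.
    ltv = lifetime_value(annual_revenue=50.0, years=5, discount_rate=0.10)
    print(f"LTV per user: ${ltv:,.2f}")
    for p in (1.0, 0.5, 0.25):
        fine = minimum_deterrent_fine(ltv, p)
        print(f"detection probability {p:.2f}: fine must exceed ${fine:,.2f}")
```

With these made-up inputs the LTV comes to roughly $190, and if regulators catch only one violation in four, the fine has to exceed about four times that to stay a deterrent.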

Yes, this raises privacy concerns. Every solution does. But we are currently trading the mental health of an entire generation for the "privacy" of being tracked by five hundred different ad-tech scripts. It’s a bad trade.
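One way the hardware-level verification idea could narrow that privacy cost is for the operating system to attest only to an age bracket, so the app never sees a birthdate or ID document. The sketch below is purely hypothetical: the token format, the `16_plus` threshold, and the function names are illustrative assumptions, not any real OS API, and it uses a shared HMAC key only to stay within the Python standard library (a real scheme would use an asymmetric signature so the app cannot mint its own tokens).

```python
import hashlib
import hmac
import json

# Hypothetical key held by the device OS. With HMAC the verifier needs the same
# key; a real deployment would use public-key signatures instead.
OS_SIGNING_KEY = b"demo-key-held-by-the-os"


def issue_age_token(age_bracket: str, key: bytes = OS_SIGNING_KEY) -> dict:
    """OS side: sign a claim containing only an age bracket, never a name or birthdate."""
    claim = json.dumps({"age_bracket": age_bracket}, sort_keys=True)
    sig = hmac.new(key, claim.encode(), hashlib.sha256).hexdigest()
    return {"claim": claim, "sig": sig}


def app_may_register(token: dict, key: bytes = OS_SIGNING_KEY) -> bool:
    """App side: allow registration only if a validly signed token says 16+."""
    expected = hmac.new(key, token["claim"].encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, token["sig"]):
        return False  # forged or tampered token is treated like no verification at all
    return json.loads(token["claim"]).get("age_bracket") == "16_plus"


if __name__ == "__main__":
    print(app_may_register(issue_age_token("16_plus")))
    print(app_may_register(issue_age_token("under_16")))
```

The design choice being illustrated is that the check moves out of the app's sign-up form (where an "I am 18" checkbox lives) and into a signed claim the app can verify but not fake.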

The Missing Nuance: It’s Not About Content

The "experts" often argue that we should focus on "harmful content" rather than the platforms themselves. This is a fundamental misunderstanding of the medium.

The medium is the harm.

The infinite scroll, the intermittent reinforcement of the "like" button, and the algorithmic curation are the problem. You could fill Instagram with nothing but puppy photos and it would still be a psychological meat grinder because of the mechanics of delivery.

We aren't banning "information." We are banning a delivery system that is incompatible with the neurological development of a child.

The Inevitability of the Backlash

Expect the "experts" to cite studies showing "inconclusive evidence" of harm. This is a classic play from the lead-paint and Big Tobacco playbook. When the variable is "the entire way a generation communicates," isolating a single cause-and-effect relationship in a longitudinal study is nearly impossible.

But we don't need a ten-year peer-reviewed study to see the house is on fire. We see it in the ER visits for self-harm. We see it in the plummeting attention spans in classrooms. We see it in the total erosion of the "unmonitored" childhood.

A ban isn't a silver bullet. It’s a firebreak. It’s a way to stop the spread of a contagion while we figure out how to rebuild the forest.

The people calling it "lazy" are usually the ones profiting from the status quo or too terrified to admit that the digital world we built is toxic to the people we are supposed to protect. Stop listening to the "digital literacy" advocates. They are teaching children how to survive in a burning building instead of just putting out the fire.

The most radical thing you can do for a child in 2026 isn't giving them a "safer" app. It’s giving them their childhood back, offline, where the algorithm can’t find them.

Delete the apps. Pass the laws. Take the hit. The kids will thank you when they finally wake up.