The transition toward an economy underpinned by artificial intelligence is frequently mischaracterized as a simple software upgrade. In reality, it represents a structural shift in how capital is deployed across the enterprise stack. Sundar Pichai’s recent assertions regarding the "AI shift" and startup investment reflect a broader acknowledgment: we are moving from a period of high-margin software distribution to a regime defined by high-intensity compute orchestration. This shift creates a vacuum in traditional venture models while simultaneously opening high-alpha opportunities for those who understand the three-tier architectural evolution of the AI market.

The Infrastructure Bifurcation

The capital requirements for foundational model development have reached a scale that excludes 99% of traditional venture-backed startups. This creates a hard ceiling at the infrastructure layer. We can map current investment opportunities not by sector, but by their position relative to the "Compute Moat."

- The Model Layer (CapEx Intensive): Dominated by incumbents and hyperscalers. The cost of training state-of-the-art (SOTA) models is rising by orders of magnitude, to a level that requires a new form of "sovereign" or "corporate" venture capital. Startups here are essentially R&D labs for future acquisitions.

- The Middleware Layer (Orchestration): This is where the first real investment opportunity for non-hyperscalers exists. This layer focuses on the "Inference Gap"—the distance between a raw model's capability and a production-ready application.

- The Application Layer (Contextual Density): The most fertile ground for startups. Success here is not determined by the underlying LLM (Large Language Model), but by the proprietary nature of the data loops and the "System of Record" status the software achieves.

The Unit Economics of Intelligence

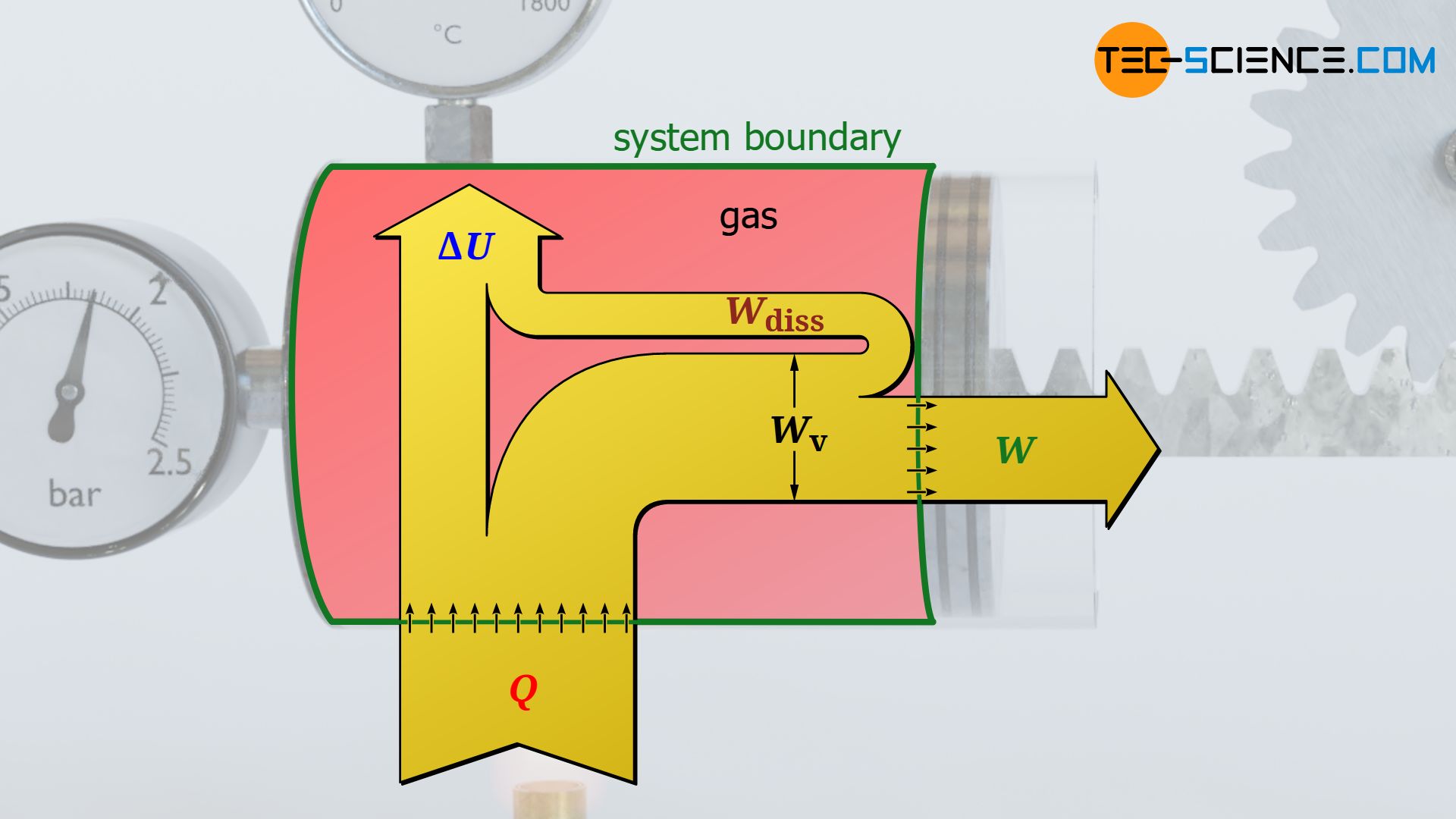

The fundamental misunderstanding in current AI discourse is the failure to distinguish between training costs and inference costs. Traditional SaaS enjoyed marginal costs approaching zero. AI-native companies face a variable cost structure tied directly to token usage and GPU hours. This creates a "Gross Margin Compression" that most early-stage investors have yet to price in.
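A minimal sketch of the margin arithmetic behind this point. All dollar figures are illustrative assumptions, not data from any company, and the helper name `gross_margin` is hypothetical.

```python
def gross_margin(revenue: float, cost_of_revenue: float) -> float:
    """Return gross margin as a fraction of revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cost_of_revenue) / revenue


# Illustrative per-customer monthly figures (assumptions for this sketch).
monthly_revenue = 100.0
hosting_cost = 5.0      # near-fixed serving cost typical of classic SaaS
inference_cost = 40.0   # token / GPU-hour cost that scales with usage

saas_margin = gross_margin(monthly_revenue, hosting_cost)
ai_margin = gross_margin(monthly_revenue, hosting_cost + inference_cost)

print(f"Traditional SaaS gross margin: {saas_margin:.0%}")
print(f"AI-native gross margin:        {ai_margin:.0%}")
```

The gap between the two numbers is the compression: the AI-native cost line grows with every additional token served, while the hosting line barely moves.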

To evaluate a startup in this space, one must apply the Inference Efficiency Ratio. This measures the value generated per dollar of compute spent. If a startup is merely wrapping a third-party API without adding a layer of proprietary logic or data caching, its moat is non-existent. It is effectively reselling a commodity with a shrinking margin.
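One way to make the Inference Efficiency Ratio concrete is sketched below. The article defines the ratio only as value per dollar of compute, so how "value" is measured (here, a flat dollar value per request) is an assumption, as are the function names, prices, and cache hit rate.

```python
def compute_spend(requests: int, cost_per_call_usd: float,
                  cache_hit_rate: float = 0.0) -> float:
    """Dollars spent on model calls; only cache misses reach the paid API."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    return requests * (1.0 - cache_hit_rate) * cost_per_call_usd


def inference_efficiency_ratio(value_usd: float, compute_usd: float) -> float:
    """Value generated per dollar of compute spent."""
    if compute_usd <= 0:
        raise ValueError("compute_usd must be positive")
    return value_usd / compute_usd


# Hypothetical workload: 10,000 requests, each worth $0.05 to the customer.
requests = 10_000
value = requests * 0.05

thin_wrapper = inference_efficiency_ratio(
    value, compute_spend(requests, cost_per_call_usd=0.02))
with_caching = inference_efficiency_ratio(
    value, compute_spend(requests, cost_per_call_usd=0.02, cache_hit_rate=0.6))

print(f"Thin wrapper ratio: {thin_wrapper:.2f}")
print(f"With caching ratio: {with_caching:.2f}")
```

Under these assumptions the same product delivers more value per compute dollar once a share of requests is answered without calling the paid model, which is the kind of proprietary layer the paragraph above asks for.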

The Logic of the "Wrapper" Fallacy

Critics often dismiss application-layer startups as "GPT wrappers." This is a shallow analysis. The value in the AI shift is not in the model; it is in the Contextual Integration.

Consider the difference between a general-purpose model and a specialized tool for structural engineering. The general model understands the laws of physics in a broad sense. The specialized tool, however, integrates with CAD software, regulatory databases, and real-time sensor data. The value is generated by the integration, not the underlying reasoning engine. Pichai’s focus on startup investment signals that Google recognizes its own models are commodities; the real profit lies in the specific, high-friction use cases that only agile startups can solve.

Structural Bottlenecks and the Talent Arbitrage

The "AI shift" is currently hitting a bottleneck that traditional capital cannot solve: the scarcity of high-tier talent capable of performing Architectural Optimization.

We are seeing a shift from "Full-Stack Developers" to "Inference Architects." These are individuals who can minimize the latent cost of model deployment while maximizing output accuracy. Large incumbents like Google or Microsoft have the capital to hoard this talent, but they suffer from "Institutional Inertia." A startup’s primary advantage is not its technology, but its Decision Velocity.

In a regime where SOTA models are updated every six months, a large corporation's two-year product roadmap is a liability. Startups that can pivot their architecture to utilize the most efficient model at any given moment—regardless of the provider—will capture the "Efficiency Alpha."
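A provider-agnostic selection rule of this kind can be sketched in a few lines. The catalog entries, prices, and quality scores below are hypothetical placeholders; in practice the quality score would come from a startup's own evaluation suite.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    provider: str
    cost_per_1k_tokens_usd: float
    quality_score: float  # 0..1, assumed to come from an internal eval suite


def pick_model(options: list[ModelOption], min_quality: float) -> ModelOption:
    """Return the cheapest model that clears the quality bar, from any provider."""
    eligible = [m for m in options if m.quality_score >= min_quality]
    if not eligible:
        raise ValueError("no model meets the required quality")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens_usd)


# Hypothetical catalog; refreshed whenever a provider ships a new model.
catalog = [
    ModelOption("large-a", "provider-x", 0.030, 0.92),
    ModelOption("medium-b", "provider-y", 0.006, 0.85),
    ModelOption("small-c", "self-hosted", 0.001, 0.70),
]

choice = pick_model(catalog, min_quality=0.80)
print(f"{choice.name} from {choice.provider}")
```

Because the rule depends only on the catalog, switching providers is a data update rather than a roadmap item.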

The Displacement of Legacy Software

The investment opportunity Pichai identifies is largely a replacement cycle. The first wave of enterprise software was about digitization (moving paper to screen). The second wave was about connectivity (Cloud/SaaS). This third wave is about Autonomy.

Legacy software requires a human to input data and a human to interpret output. AI-native software performs the "Labor of Interpretation." For investors, the target should be companies that eliminate internal workflows rather than those that simply make them more efficient.

- Fact: Legacy SaaS seats are being challenged by "Agentic Workforces."

- Hypothesis: Over the next five years, the basis of Total Contract Value (TCV) will shift from per-seat pricing to value-based or outcome-based pricing models.

This shift in pricing architecture is a massive opportunity for startups to underbid incumbents who are trapped in per-user licensing models.

Risk Profiles and the "Death of the Generalist"

The most significant risk in the current startup ecosystem is Model Obsolescence. If a startup spends 18 months building a feature set that is integrated into the next version of Gemini or GPT as a native capability, that startup is effectively bankrupt.

To mitigate this, sophisticated investors are looking for Defensible Data Loops.

- User Action: The user interacts with the AI.

- Reinforcement: The system records the human's correction or acceptance of the AI's output.

- Refinement: This specific interaction data—which Google or OpenAI does not have access to—is used to fine-tune a smaller, more efficient local model.

This "Local Flywheel" creates a moat that even the most powerful foundational models cannot breach because the foundational model lacks the specific, private context of the user’s workflow.
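The three steps above can be sketched as a small feedback log that turns user corrections into fine-tuning pairs. The class and field names are hypothetical, and the actual fine-tuning step is left out because it depends on the model and tooling chosen.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Interaction:
    prompt: str
    model_output: str
    accepted: bool
    correction: Optional[str] = None  # the user's fix, if the output was rejected


@dataclass
class FeedbackLog:
    records: list[Interaction] = field(default_factory=list)

    def record(self, interaction: Interaction) -> None:
        """Reinforcement step: store the user's acceptance or correction."""
        self.records.append(interaction)

    def training_pairs(self) -> list[tuple[str, str]]:
        """Refinement step: build (prompt, target) pairs for fine-tuning."""
        pairs = []
        for r in self.records:
            if r.accepted:
                pairs.append((r.prompt, r.model_output))
            elif r.correction is not None:
                pairs.append((r.prompt, r.correction))
            # Rejections without a correction carry no target and are skipped.
        return pairs


log = FeedbackLog()
log.record(Interaction("Summarize clause 4", "Clause 4 covers liability.", True))
log.record(Interaction("Summarize clause 7", "Clause 7 covers fees.", False,
                       correction="Clause 7 covers termination."))
log.record(Interaction("Summarize clause 9", "Unclear.", False))

print(log.training_pairs())
```

The resulting pairs exist only inside the startup's own system, which is what makes the loop private.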

The Geometry of the Market Shift

The move toward AI-driven investment isn't just about "innovation"; it’s about the Deflation of Intelligence. As the cost of a "unit of reasoning" drops toward zero, the value of that reasoning also drops unless it is applied to a high-value problem.

We can visualize this as a shifting frontier:

- Low Complexity / Low Context: Fully commoditized (e.g., email drafting, basic coding). No startup opportunity.

- High Complexity / Low Context: Dominated by Big Tech (e.g., scientific research, weather modeling). High CapEx, low startup feasibility.

- Low Complexity / High Context: Strong startup potential (e.g., hyper-personalized consumer apps, niche automation).

- High Complexity / High Context: The "Gold Mine" (e.g., legal discovery, medical diagnostics, supply chain optimization). This is where the "AI shift" creates generational companies.
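The four quadrants above can be written down as a lookup table, which makes the screening rule explicit. This is only a sketch: the names are hypothetical, and deciding whether a real problem counts as "high" or "low" on either axis remains a judgment call.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


# Keys are (complexity, context); verdicts paraphrase the four quadrants above.
QUADRANTS = {
    (Level.LOW, Level.LOW): "Fully commoditized; no startup opportunity",
    (Level.HIGH, Level.LOW): "Dominated by Big Tech; low startup feasibility",
    (Level.LOW, Level.HIGH): "Strong startup potential",
    (Level.HIGH, Level.HIGH): "Gold mine; generational companies",
}


def classify(complexity: Level, context: Level) -> str:
    """Return the quadrant verdict for a given complexity and context level."""
    return QUADRANTS[(complexity, context)]


print(classify(Level.HIGH, Level.HIGH))
print(classify(Level.LOW, Level.LOW))
```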

Operational Realities of the New Venture Model

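Investors must move away from evaluating "Growth at All Costs" and toward "Compute-Adjusted Growth." A company whose revenue grows 100% year-over-year while its compute bills grow 150% is a failing business: compute consumes a larger share of every revenue dollar each year, so gross margin shrinks as the company scales.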
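The 100% versus 150% example can be projected forward to show why the gap matters. The growth rates come from the paragraph above; the starting revenue and compute bill are assumptions chosen for illustration, and the function name is hypothetical.

```python
def compute_share_by_year(revenue: float, compute_cost: float,
                          revenue_growth: float, compute_growth: float,
                          years: int) -> list[float]:
    """Compute cost as a fraction of revenue for year 0 through `years`."""
    shares = []
    for _ in range(years + 1):
        shares.append(compute_cost / revenue)
        revenue *= 1.0 + revenue_growth
        compute_cost *= 1.0 + compute_growth
    return shares


# Assumed starting point: $1.0M revenue, $0.3M compute bill.
shares = compute_share_by_year(
    revenue=1_000_000.0, compute_cost=300_000.0,
    revenue_growth=1.00, compute_growth=1.50, years=3)

for year, share in enumerate(shares):
    print(f"Year {year}: compute is {share:.1%} of revenue")
```

With these growth rates the compute share is multiplied by 1.25 every year, so any starting share eventually overtakes revenue unless the cost curve is bent.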

The successful AI startup of the 2026-2030 era will look more like a specialized utility than a traditional software company. It will require:

- Hybrid Infrastructure: Using SOTA models for complex reasoning and distilled, smaller models for routine tasks to preserve margins.

- Data Sovereignty: Ensuring that the value created by user data stays within the startup's ecosystem, rather than being "leaked" to the model providers.

- Vertical Integration: Owning the entire stack of a specific industry problem, from the user interface down to the fine-tuned model weights.
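The margin effect of the hybrid infrastructure point can be estimated with a blended-cost calculation. The per-request prices and the 80% routine share are illustrative assumptions, and the function name is hypothetical.

```python
def blended_cost_per_request(routine_share: float,
                             small_model_cost_usd: float,
                             large_model_cost_usd: float) -> float:
    """Average cost per request when routine traffic goes to the small model."""
    if not 0.0 <= routine_share <= 1.0:
        raise ValueError("routine_share must be between 0 and 1")
    return (routine_share * small_model_cost_usd
            + (1.0 - routine_share) * large_model_cost_usd)


# Assumed prices: $0.001 per request on a distilled model, $0.03 on a SOTA model.
all_large = blended_cost_per_request(0.0, 0.001, 0.03)
hybrid = blended_cost_per_request(0.8, 0.001, 0.03)
savings = 1.0 - hybrid / all_large

print(f"All-SOTA cost per request: ${all_large:.4f}")
print(f"Hybrid cost per request:   ${hybrid:.4f}")
print(f"Compute savings:           {savings:.1%}")
```

The calculation assumes the distilled model handles routine tasks at acceptable quality; if it does not, the rerouted requests add cost rather than remove it.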

Strategic Execution for the Next Cycle

The immediate play for capital allocators and founders is the Disaggregation of the Enterprise.

Large, monolithic software suites are vulnerable. They are too slow to integrate AI at the core. The strategy is to identify the most expensive human-in-the-loop process within a Fortune 500 company—be it compliance, procurement, or customer success—and build an agentic system that doesn't just assist the human but replaces the process entirely.

The "AI shift" is not an invitation to build more software; it is a mandate to build more intelligence. The startups that will win are those that treat compute as a precious resource and context as the ultimate defensible asset. The focus must remain on the Unit Cost of Autonomy. If a startup can deliver an autonomous outcome for 1/10th the cost of a human or a legacy software system, the market capture will be total.

The era of the "General Purpose AI" as a business model is over. The era of "Contextually Absolute AI" has begun. Direct your capital toward the friction points where generic intelligence fails, and where proprietary context is the only viable solution.