Capital expenditure among the "Big Three" cloud providers (Microsoft, Google, and Amazon) has decoupled from historical growth patterns, driven by a single external forcing function: OpenAI. While market analysis often treats the relationship between OpenAI and the hyperscalers as a symbiotic growth engine, a structural audit reveals a more precarious dynamic. The current earnings cycle suggests that OpenAI acts as a massive "tax" on cloud margins, forcing front-loaded infrastructure spending that may not be matched by near-term product revenue.

This structural shift can be deconstructed into three primary economic pressures: the GPU Capex Trap, the Cannibalization of Legacy Cloud, and the Sovereign AI Divergence.

The GPU Capex Trap and Depreciation Drag

The financial profile of a hyperscaler traditionally relies on a predictable depreciation schedule for hardware, typically spanning five to six years for servers. The transition to AI-centric infrastructure collapses this model. H100 and B200 GPU clusters require higher power density, specialized cooling, and faster replacement cycles due to the rapid pace of architectural innovation.

- Front-Loaded Capital Intensity: Hyperscalers are currently building data centers not for today’s demand, but to ensure OpenAI and its competitors do not migrate to rival clusters. This creates a "Prisoner’s Dilemma": each provider judges the cost of not building to be higher than the risk of overcapacity, so all of them build, even though collective restraint would protect margins across the industry.

- The Margin Squeeze: As OpenAI scales its model parameters, the compute requirements increase non-linearly. Microsoft, as the primary host, must allocate a significant portion of its Azure capacity to a single entity that operates at a different margin profile than traditional enterprise SaaS customers.

- Hardware Heterogeneity: The rush to support OpenAI’s proprietary requirements forces hyperscalers to invest in specific Nvidia-centric stacks alongside their internal silicon (like Google’s TPU or Amazon’s Trainium). The result is a mixed fleet and less ability to optimize costs through in-house chips for that specific workload.
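The depreciation drag described above can be made concrete with simple straight-line arithmetic. The sketch below is illustrative only: the capex figure and the three-year GPU life are assumptions chosen for the example, not reported numbers, and `annual_depreciation` is a hypothetical helper.

```python
def annual_depreciation(capex: float, useful_life_years: float) -> float:
    """Straight-line depreciation: an equal expense in each year of useful life."""
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return capex / useful_life_years


# Hypothetical cluster cost, chosen only to keep the arithmetic readable.
CLUSTER_CAPEX = 12_000_000_000  # USD

# Traditional server schedule (upper end of the five-to-six-year range).
server_schedule = annual_depreciation(CLUSTER_CAPEX, 6)
# Assumed shorter useful life for a GPU cluster; the real figure is uncertain.
gpu_schedule = annual_depreciation(CLUSTER_CAPEX, 3)

print(f"6-year schedule: ${server_schedule / 1e9:.1f}B per year")
print(f"3-year schedule: ${gpu_schedule / 1e9:.1f}B per year")
print(f"Extra annual expense: ${(gpu_schedule - server_schedule) / 1e9:.1f}B")
```

Under these assumptions, halving the useful life doubles the annual depreciation charge on the same capital outlay, which is the mechanism behind the margin pressure.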

The Cannibalization of Legacy Cloud Spend

The "OpenAI effect" is not purely additive to cloud revenue. It represents a fundamental reallocation of enterprise IT budgets. When a Fortune 500 company integrates GPT-4 via API, that spend frequently originates from existing cloud transformation or legacy app modernization budgets.

The mechanism of this cannibalization follows a distinct path:

- Compute Efficiency vs. Volume: LLMs can replace complex, multi-layered legacy software architectures. A single intelligent agent might replace ten traditional microservices. While the unit cost of the LLM call is high, the aggregate "cloud footprint" of the enterprise may shrink or stagnate in non-AI sectors.

- The Wrapper Problem: Thousands of startups are building "wrappers" around OpenAI’s models. These companies have high revenue but low structural "stickiness." If OpenAI absorbs those features into its core model, the hyperscaler loses a diverse ecosystem of small spenders in exchange for one massive, high-leverage customer (OpenAI itself).

The Revenue Recognition Lag

A critical delta exists between the "shovels" (infrastructure build-out) and the "gold" (AI-driven software revenue). While Microsoft has integrated Copilot across its stack, AI still accounts for a minority share of Azure's growth compared to traditional cloud services.

The bottleneck is not the technology, but the Enterprise Integration Velocity.

- Data Readiness: Most enterprises lack the clean, vectorized data pipelines necessary to utilize OpenAI’s models at scale.

- Security and Compliance: Security reviews and compliance sign-off lengthen the path to production. The time from an initial "OpenAI pilot" to a production-scale deployment is currently averaging 9 to 14 months.

- Inference Costs: Unlike training, which is largely an upfront cost, inference is a recurring operational expense that scales with usage. If the ROI of an AI feature is not immediately apparent, enterprises scale back their API usage, leading to volatility in the hyperscaler's otherwise predictable "annuity" revenue model.
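The inference-cost point above reduces to a monthly comparison between the recurring API bill and the value the feature returns. The following is a minimal sketch under stated assumptions: request volume, token counts, price, and attributed value are all hypothetical placeholders, and the function names are invented for illustration.

```python
def monthly_inference_cost(requests: int, tokens_per_request: int,
                           price_per_million_tokens: float) -> float:
    """Recurring API spend for one month of usage."""
    total_tokens = requests * tokens_per_request
    return total_tokens / 1_000_000 * price_per_million_tokens


def monthly_net_value(attributed_value: float, inference_cost: float) -> float:
    """Business value attributed to the feature minus its inference bill."""
    return attributed_value - inference_cost


# All inputs are hypothetical placeholders, not measured figures.
REQUESTS = 2_000_000
TOKENS_PER_REQUEST = 1_500
PRICE_PER_MILLION_TOKENS = 10.0  # USD, assumed
ATTRIBUTED_VALUE = 25_000.0      # USD per month, assumed

cost = monthly_inference_cost(REQUESTS, TOKENS_PER_REQUEST, PRICE_PER_MILLION_TOKENS)
net = monthly_net_value(ATTRIBUTED_VALUE, cost)

print(f"Monthly inference cost: ${cost:,.0f}")
print(f"Monthly net value: ${net:,.0f}")
if net < 0:
    print("Feature does not cover its inference bill; usage is likely to be cut.")
```

With these placeholder inputs the feature costs $30,000 a month against $25,000 of attributed value, so the bill recurs every month the shortfall persists. That recurring gap, rather than any one-time outlay, is what makes API usage easy to scale back.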

Strategic Divergence in the Hyperscaler Response

The hyperscalers are not reacting to OpenAI with a unified strategy. Their internal architectures dictate their defensive postures.

Microsoft: The Proxy Strategy

Microsoft has essentially outsourced its frontier-model R&D to OpenAI. The risk is a form of "Provider Lock-in" in which Microsoft depends on a single partner. If OpenAI achieves AGI or a significant breakthrough that allows it to operate independently of Azure, or if it seeks to diversify its hardware providers, Microsoft’s massive infrastructure investment risks becoming a stranded asset. Microsoft's primary countermeasure is the rapid vertical integration of "M365 Copilot" to lock in the user interface layer before the underlying model becomes a commodity.

Google: The Vertical Integration Counter

Google’s response to the OpenAI threat is rooted in its ownership of the full stack: the TPU (Tensor Processing Unit) at the silicon layer, the Gemini model above it, and the Android and Workspace distribution channels on top. Google is betting that the "cost per query" will eventually be the deciding factor in the AI wars. By using internal silicon, it avoids the "Nvidia Tax" that currently weighs on Microsoft’s margins.

Amazon: The Neutral Swiss Model

AWS is positioning itself as the marketplace (Bedrock) rather than a partisan. By offering Anthropic, Meta’s Llama, and its own Titan models, Amazon is hedging against the possibility that OpenAI’s dominance is temporary. However, the early lack of a "flagship" model on par with GPT-4 has led to a perceived loss of momentum in the "AI-driven earnings" narrative.

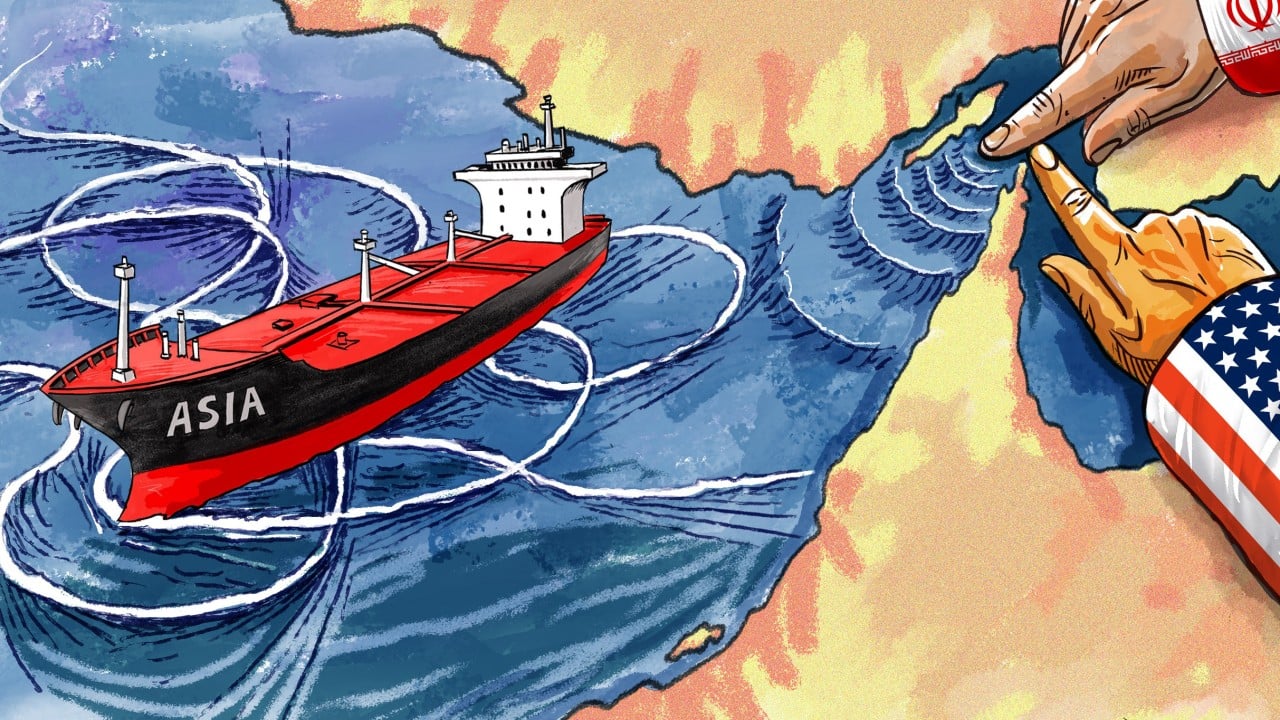

The Sovereign AI and Private Cloud Pivot

OpenAI’s dominance is forcing a secondary market shift that hyperscalers must navigate: the rise of Sovereign AI. National governments and highly regulated industries (defense, healthcare, tier-1 banking) are increasingly wary of sending data to a centralized "black box" model hosted on a public cloud.

This creates a structural requirement for "Air-gapped AI."

- The Edge Computing Shift: As models become more efficient (through quantization and distillation), more inference can run on edge devices and local hardware, so the need for massive hyperscale clusters for inference may diminish.

- On-Premise Returns: For the first time in a decade, the "Move to Cloud" trend is facing a counter-trend where companies want to run proprietary models on internal hardware to avoid the latency and privacy risks associated with OpenAI’s API.

Logical Failure Points in the Current Bull Case

The assumption that "more AI equals more cloud profit" ignores the diminishing marginal utility of model scale. If the jump from GPT-4 to GPT-5 does not yield a proportional increase in enterprise willingness-to-pay, the hyperscalers will find themselves with an oversupply of H100s and no high-margin workloads to run on them.

Furthermore, the Energy Constraint is a hard physical limit that no software optimization can bypass. The cost of power is now a primary driver of data center location and viability. Hyperscalers are no longer just competing on software talent; they are competing for nuclear power contracts and grid priority.

Strategic Recommendation for Infrastructure Allocation

The optimal play for a hyperscaler in the shadow of OpenAI is not "more compute," but Compute Versatility.

- Decouple Infrastructure from Model Dependencies: Prioritize "Software Defined Power" and modular data center designs that can switch between high-density AI training and high-availability general-purpose compute.

- Aggressive Silicon Internalization: Shift the capex mix from third-party GPUs to internal ASICs (Application-Specific Integrated Circuits) to reclaim the 30-40% margin currently captured by hardware vendors.

- The Data Gravity Play: Instead of competing on the model layer (where OpenAI has the lead), hyperscalers should focus on the "Data Fabric." By making it easier to store, clean, and vectorize data within their ecosystem, they ensure that regardless of which model wins, the data stays in their cloud and continues to generate storage and egress fees.
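The silicon internalization recommendation above can be sized with back-of-the-envelope arithmetic. This sketch takes the 30-40% vendor margin cited above as given and assumes, optimistically, that internal ASICs deliver equivalent capacity at the vendor's underlying cost with no added R&D expense. The capex figure, the internalized share, and the function name are hypothetical.

```python
def vendor_margin_reclaimed(gpu_capex: float, share_internalized: float,
                            vendor_margin: float) -> float:
    """Capex saved by moving a share of GPU spend to internal silicon.

    Assumes internal chips cost what the vendor's hardware costs to make,
    so the saving is exactly the vendor margin on the internalized share.
    """
    if not 0.0 <= share_internalized <= 1.0:
        raise ValueError("share_internalized must be between 0 and 1")
    if not 0.0 <= vendor_margin < 1.0:
        raise ValueError("vendor_margin must be in [0, 1)")
    return gpu_capex * share_internalized * vendor_margin


# Hypothetical inputs for illustration only.
GPU_CAPEX = 10_000_000_000   # USD spent on third-party GPUs per year
SHARE_INTERNALIZED = 0.5     # half of that spend moved to internal ASICs

for margin in (0.30, 0.40):  # the margin range cited in the text
    saved = vendor_margin_reclaimed(GPU_CAPEX, SHARE_INTERNALIZED, margin)
    print(f"Vendor margin {margin:.0%}: ${saved / 1e9:.2f}B reclaimed")
```

Because the model ignores chip design costs, software porting, and any performance gap, the result is best read as an upper bound on the saving rather than a forecast.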

The current earnings volatility is not a sign of AI failure, but a sign of the Infrastructure-to-Application Phase Shift. The winners will not be those who spend the most on OpenAI’s needs, but those who successfully bridge the gap between "raw compute" and "verified enterprise outcomes."