In a small town in Pennsylvania, the late-shift operator at a water treatment plant noticed something that shouldn’t have been possible. On his monitor, a cursor moved. It wasn’t his hand guiding it. It slid across the screen with a jagged, mechanical precision, hovering over the controls that govern the chemical balance of the town’s drinking water. For a heartbeat, the operator likely felt that cold, primal spike of adrenaline—the realization that the walls of his physical world had been bypassed by a ghost.

This wasn't a scene from a high-budget thriller. It was a Tuesday.

The "ghost" was a group of hackers linked to the Iranian government, specifically the Islamic Revolutionary Guard Corps (IRGC). They didn't use a sophisticated, multimillion-dollar "zero-day" exploit. They didn't need to. They simply knocked on the digital front door of a programmable logic controller (PLC) and found it not only unlocked but with the factory-default password practically written on the welcome mat.

We think of cyber warfare as a clash of titans: encrypted satellites, underground bunkers, and glowing green code. The reality is far more mundane and, because of that, far more terrifying. It is the story of a bored technician in Tehran clicking through a list of internet-connected industrial devices and finding a water pump in western Pennsylvania that still uses "1111" as its passcode. For another look at this story, see the recent update from Gizmodo.

The Invisible Architecture of the Everyday

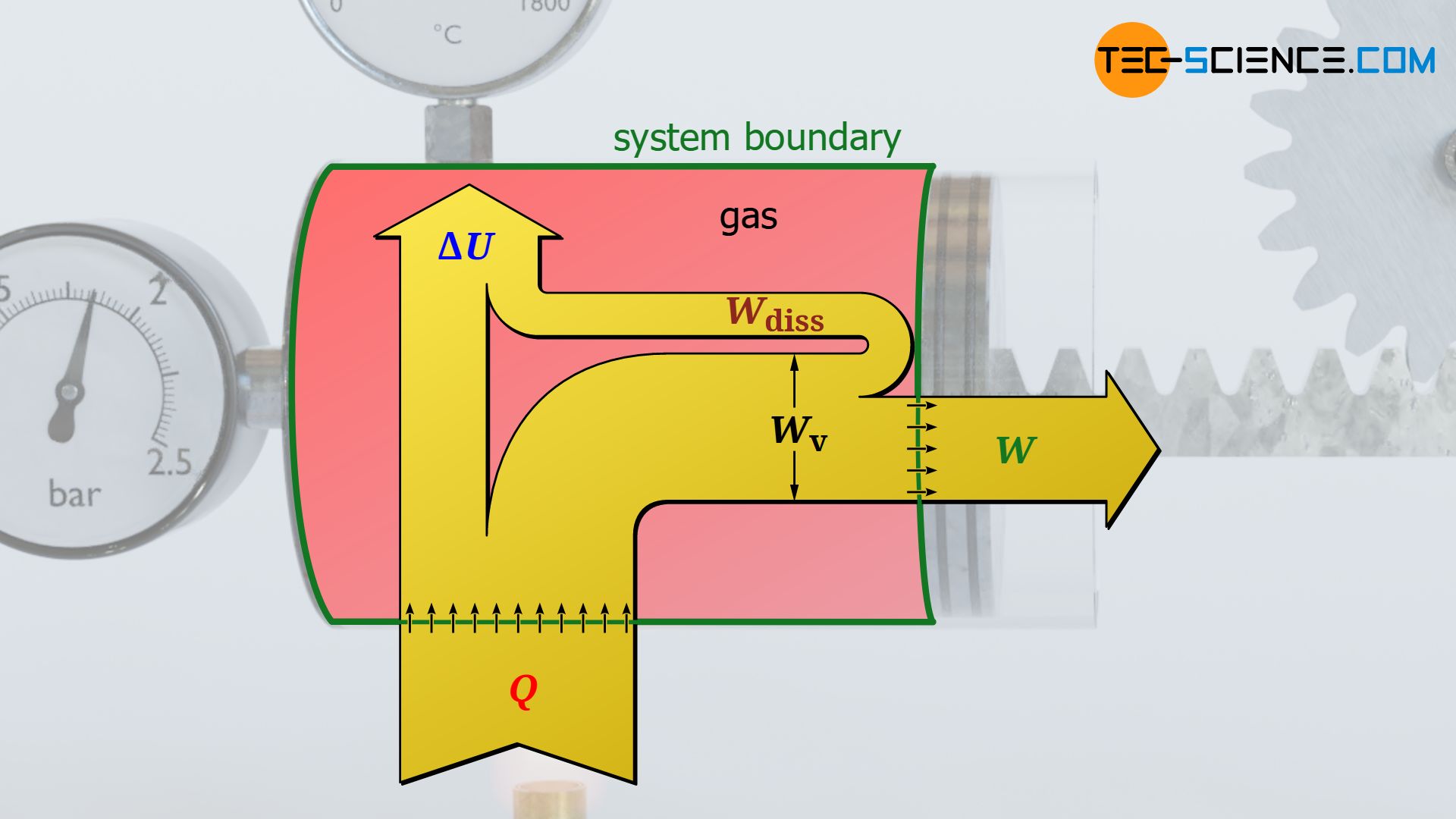

Look around your room. Somewhere nearby, there is a device that bridges the gap between a line of code and a physical action. Maybe it’s the thermostat. Maybe it’s the pressure valve in the gas line three blocks away. These are the nerves of our civilization. We call them Operational Technology (OT).

While your laptop receives constant updates and your smartphone is shielded by biometric locks, the computers that run our power grids and water systems are often relics. They were built for longevity, not security. They were designed to sit in a dusty basement for thirty years and do one thing: keep the pressure at 50 psi.

When these systems were first installed, the idea of connecting them to the "world wide web" was a pipe dream. But then came the push for efficiency. We wanted to monitor the water levels from a tablet at home. We wanted to adjust the power flow without driving two hours into the desert. So, we plugged the old machines into the new internet.

[Image of an industrial programmable logic controller]

The hackers, operating under the persona "CyberAv3ngers," aren't looking for credit card numbers. They are looking for leverage. By targeting a specific line of Israeli-made hardware, Unitronics Vision Series controllers, they turned a regional geopolitical conflict into a kitchen-table anxiety for families in Aliquippa, Pennsylvania. They didn't have to poison the water. They just had to prove that they could.

The Anatomy of a Breach

Consider a hypothetical operator named Sarah. Sarah runs a small municipal utility. She is overworked, underfunded, and her primary concern is a literal leak in a pipe on 5th Street. Security is something she assumes the "IT guys" handle, but the IT guys are busy fixing a printer in the Mayor's office.

The Iranian hackers use automated scanners. These are digital bloodhounds that roam the internet 24 hours a day, sniffing for the unique "fingerprint" of industrial hardware. When the scanner finds a Unitronics PLC, it reports back.

The hackers then try the factory-default password, or a short list of common ones. It takes seconds. Once they are in, the screen on Sarah’s controller changes. It no longer displays the water pressure. Instead, it shows a message: "You have been hacked, down with Israel. Every equipment made in Israel is CyberAv3ngers legal target."

The machine is now a brick. The pumps stop. The alarms scream.

This isn't a theory. The Cybersecurity and Infrastructure Security Agency (CISA), along with the FBI and the NSA, recently issued a joint advisory because this exact scenario is playing out across multiple states. The same controllers run equipment at water districts, energy facilities, food and beverage plants, and healthcare facilities. The hackers aren't discriminating based on the size of the target. They are fishing with a massive net, and our critical infrastructure is swimming right into it.

The Psychological Front

Why water? Why now?

The goal of a state-sponsored hack against a small-town utility is rarely total destruction. If Iran wanted to cripple the United States, they wouldn't start with a water tower in a town of 9,000 people. The intent is "cognitive effect." It is the slow, corrosive realization that the basic certainties of life—that the lights turn on, that the water is safe, that the gas heater won't explode—are contingent on the whims of a teenager in a dark room six thousand miles away.

It is a form of shadow-boxing. By hitting these "soft targets," the IRGC sends a message to the U.S. government: We are inside your house. We are touching your thermostat. We are watching your children drink.

The stakes are invisible until they are absolute. We don't notice the "cyber" part of the war until it becomes a "physical" problem. When the water stops flowing, it’s not a data breach. It’s a crisis. When the cooling system in a hospital’s pharmacy fails, it’s not a glitch. It’s a threat to life.

The Cost of Convenience

We are currently paying a "security debt" that has been accumulating for two decades. Every time a company chose a cheaper, unmanaged switch over a secure one, the debt grew. Every time a manager decided that changing a factory-default password was "too much of a hassle" for the field crews, the interest accrued.

Now, the bill is coming due.

Federal agencies are begging local utilities to do the basics. They are urging them to replace factory-default passwords and to take their controllers off the public internet. They are pleading with them to use multi-factor authentication, that annoying second step where you have to type in a code from your phone.

But for many small towns, the resources aren't there. There is no "Cyber Security Department" in a township with a three-person maintenance crew. They are the front lines of a global conflict, and they are armed with nothing but a dial-up connection and a prayer.

The vulnerability is systemic. It’s not just one brand of controller or one specific country. Today it is Iran and Unitronics. Tomorrow it could be another nation-state targeting the software that manages our traffic lights or the sensors that monitor our bridges. We have built a world where everything is connected, which means everything is vulnerable.

The Human Error at the Heart of the Machine

There is a tendency to blame the technology. We want to believe there is a "patch" or a "software update" that will make us safe. But the most sophisticated firewall in the world is useless if a human being leaves the back door propped open for some fresh air.

The Iranian hackers didn't "break" into these systems in the traditional sense. They walked in. They used the credentials provided by the manufacturer. They exploited the most human of all traits: the desire for things to just work without extra steps.

We are living through a period of profound asymmetrical warfare. A small team of hackers with a modest budget can knock pumps offline, force utilities into costly emergency responses, and sow widespread panic simply by exploiting a default setting nobody bothered to change. They don't need a navy. They need an internet connection and a list of common passwords.

The response from the U.S. government has been a flurry of warnings and "Best Practices" documents. But documents don't change passwords. People do. The struggle isn't between American code and Iranian code. It’s a struggle of discipline.

The Quiet After the Breach

When the hackers are finally kicked out of a system, there is no explosion. There is no smoke. A technician sits down, reloads the controller's program from a clean backup, changes the password to something complex, and the pump starts whirring again. The water pressure stabilizes. The "ghost" is gone.

But the ghost leaves a lingering chill.

The operator in Pennsylvania still has to look at that monitor every night. Every time the cursor flickers or a sensor reads a little high, there is that split-second doubt. Is it a mechanical fluke, or is someone else in the room?

The invisible stakes of this conflict aren't just about the hardware. They are about the trust we place in the world around us. We have spent a century building a civilization that works so well we have forgotten how it functions. We take the "magic" of the faucet for granted.

Now, we are being forced to remember. We are being forced to realize that the thin blue line between us and a public health disaster isn't a concrete wall or a guarded gate. It’s a line of code, a secure password, and a tired operator watching a screen in the middle of the night, hoping the cursor doesn't start moving on its own again.

The true frontline of modern war isn't a trench in a faraway field. It’s the water meter in your basement and the silent, digital pulse of the city sleeping outside your window.