Anthropic has fast-tracked the release of its new specialized cybersecurity model, a move that appears less like a scheduled product launch and more like a desperate defensive crouch. The rollout comes immediately after reports surfaced regarding a leak of internal source code, an event that should rattle the bones of any company claiming to build "safe" and "steerable" artificial intelligence. By shipping a version of Claude specifically tuned for vulnerability detection and offensive security analysis, Anthropic is attempting to seize the narrative. They want the public to see a hero providing tools to defenders. The reality is more complex. They are a company whose own house is on fire, selling fire extinguishers to the neighbors while the smoke still rises from their own server rooms.

The leaked code, while reportedly not including the weights of the crown-jewel models themselves, represents a catastrophic failure of internal hygiene. For an organization founded by OpenAI defectors on the bedrock of "safety," losing control of its own architectural blueprints is a cardinal sin. This isn't just about losing intellectual property. It is about handing bad actors a roadmap to the cracks in the armor. When you lose the source, you lose the benefit of the doubt.

The Panic Behind the New Cyber Weights

The timing of this release suggests a frantic internal pivot. As industry insiders know, specialized fine-tuning for cybersecurity isn't something you just "do" over a weekend, so the model was almost certainly in development before the leak. The decision to make it public now, however, looks like a calculated PR maneuver. Anthropic is effectively trying to prove that their models can patch the very kinds of holes that led to their own data spill.

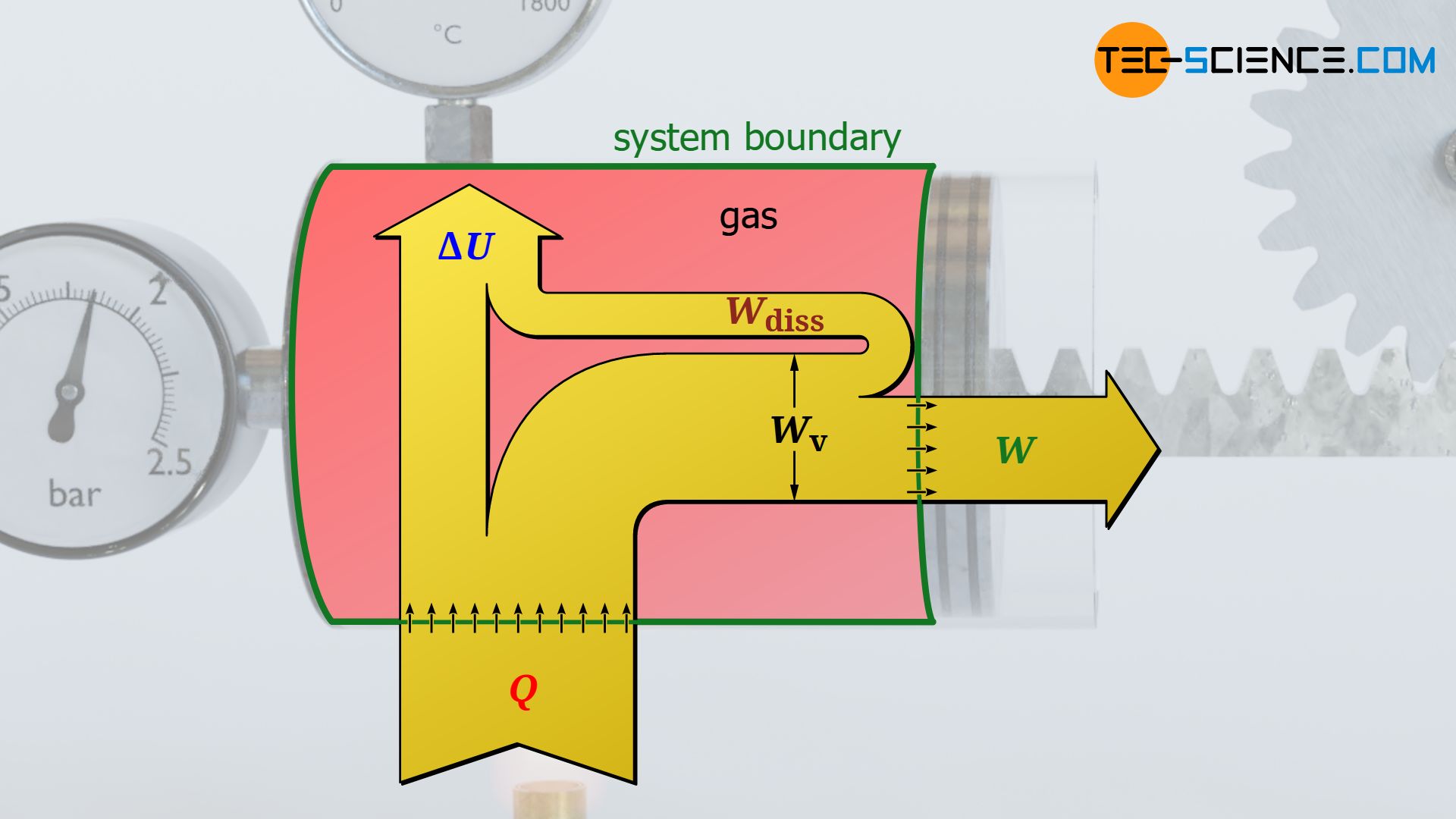

This new model is designed to assist in "red teaming," the process of attacking a system to find its weaknesses. It can analyze thousands of lines of C++ or Python to find buffer overflows or logic flaws that a human might miss after ten hours of staring at a screen. But there is a glaring contradiction here. If the model is good enough to find these bugs for the "good guys," it is inherently powerful enough to weaponize them for the "bad guys." Anthropic claims to have "safety guardrails" in place to prevent the model from being used to create malware, but such guardrails have historically been porous.

History shows that when a model ships with "safety filters," the community tends to find a bypass within days, sometimes within forty-eight hours. We have seen it with jailbreaks, and we will see it here. By releasing a cyber-specific model, Anthropic has essentially handed the world a dual-use weapon and asked everyone to promise they will only use it for target practice.

Why Source Code Leaks Are Lethal for AI Labs

When a traditional software company like Microsoft or Adobe loses source code, it’s a headache. When an AI lab loses it, it’s an existential threat. The architecture of these models is where the secret sauce lives. Understanding how Anthropic handles "Constitutional AI"—their method of training a model to follow a set of rules—allows a sophisticated attacker to reverse-engineer the "thou shalt nots."

If an attacker knows the specific prompts or reward functions used to train Claude, they can craft inputs that dance right on the edge of those boundaries. It is like having the blueprints to a bank vault. Even if you don’t have the combination, you know exactly where the drywall is thinnest and which sensors can be blinded with a laser pointer.

The Myth of AI Safety Under Pressure

Anthropic’s core identity is built on the idea of being the "adults in the room." They preach a gospel of caution. Yet, when faced with a security lapse, they defaulted to the oldest trick in the Silicon Valley playbook: distracting the press with a shiny new object.

This move also signals a broader shift in the industry. We are moving away from the era of "General Purpose AI" and into the era of specialized, high-stakes tooling. The problem is that the security infrastructure at these labs hasn't kept pace with the capabilities of the models they are building. They are building nuclear reactors in wooden sheds.

The Double-Edged Sword of Vulnerability Discovery

Let’s look at the mechanics of what Anthropic has actually released. The model is supposedly restricted to "vetted" partners, a term that is notoriously slippery in the tech world. These partners will use Claude to scan their repositories for "zero-day" vulnerabilities—bugs that the developer doesn't know about yet.

- The Pro: Companies can fix bugs before they are exploited.

- The Con: An automated bug-finder accelerates the "exploit cycle" to a speed that human defenders cannot match.

If a specialized Claude model can find ten vulnerabilities in a banking app in three seconds, the security team now has ten fires to put out. If the "vetting" process fails even once, an attacker has a tool that can generate a year's worth of cyberattacks in a single afternoon. This isn't just theory. We have already seen how basic versions of these models can be used to write convincing phishing emails or obfuscate malicious code. A version trained for the task is a different beast entirely.

The Investor Narrative vs. Technical Reality

The venture capital world loves a "recovery" story. By pushing out this cyber model, Anthropic is signaling to its backers, including giants like Google and Amazon, that it is still in control. It is trying to show that its pipeline is so deep that even a source code leak is just a minor speed bump.

But don't be fooled by the high-gloss press releases. The technical reality is that Anthropic is now fighting a two-front war. On one side, they are trying to maintain their lead in the "reasoning" race against OpenAI’s o1 and Google’s Gemini. On the other, they are now forced to play a high-stakes game of whack-a-mole with their own security.

Broken Trust Cannot Be Patched

Once source code is out, it is out forever. You cannot "un-leak" a repository. The bits are sitting on a server somewhere in a jurisdiction that doesn't care about US copyright law. Every subsequent model Anthropic builds will be scrutinized by attackers who now have a much better understanding of the foundation those models are built upon.

The release of the cyber AI model feels like an attempt to build a moat after the castle has already been breached. It’s a tactical move in a strategic vacuum. If Anthropic wants to regain its status as the leader in AI safety, it needs to stop talking about constitutions and start practicing basic operational security.

The Looming Regulation Shadow

This incident will almost certainly trigger a response from regulators. We are currently seeing a global debate over "open weights" versus "closed models." Anthropic has always been a staunch defender of the closed-model approach, arguing that the risks of misuse are too high to let the public have the underlying files.

By losing their source code, they have accidentally created a "middle ground" that is the worst of both worlds. The code is out there for the bad actors, but the good guys don't have the full transparency that comes with a truly open-source project. It is a disaster of the highest order, wrapped in a "product launch" ribbon.

The Cost of Moving Fast and Breaking Things

The "Move Fast and Break Things" mantra was supposed to be dead in the AI era. We were told AI was too important, too dangerous for the old Zuckerberg-era recklessness. Yet here we are: a leak, followed by a rush to market with a high-risk tool.

Anthropic's new model might help some companies find a few more bugs in their software. It might even prevent a few hacks. But it will not fix the fundamental breach of trust that occurred when their internal files hit the open market. You cannot automate integrity.

The industry needs to stop treating AI safety as a marketing exercise and start treating it as a hard engineering discipline. That means locking down the environments where these models are born. It means acknowledging that a "safe" model is useless if the company building it can't keep its own doors locked.

If you are a CISO considering using Anthropic’s new cyber tools, you have to ask yourself a hard question. Why would you trust a security tool from a company that just failed its own most basic security test? The irony is thick enough to choke on. Anthropic is offering to help you secure your future while they are still struggling to secure their past.

Clean up your own house before you tell others how to sweep their floors.